
The idea is to build a layer that outputs a linear combination of a linear function and a Heaviside function, following the equation output = w_s*S(input) + w_h*H(input) + b, where S and H are the linear function and the Heaviside function, and w_s, w_h, and b are trainable parameters.
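The combination above can be sketched as a small custom Keras layer. This is a minimal illustration under stated assumptions, not necessarily the layer used in the article: the class name `WellJumpLayer` is hypothetical, S is assumed to be the identity, the three parameters are assumed to be scalars, and the step threshold of 0.5 follows the `heaviside` snippet from the article.

```python
import tensorflow as tf


@tf.custom_gradient
def heaviside(x):
    # Step function with its gradient forced to zero (threshold 0.5 as in the article's snippet).
    def grad(dy):
        return tf.zeros_like(dy)
    return tf.where(x > 0.5, tf.ones_like(x), tf.zeros_like(x)), grad


class WellJumpLayer(tf.keras.layers.Layer):
    """Hypothetical layer computing w_s * S(x) + w_h * H(x) + b, with S assumed to be the identity."""

    def build(self, input_shape):
        # Scalar trainable parameters (an assumption; the article does not give their shapes).
        self.w_s = self.add_weight(name="w_s", shape=(), initializer="ones", trainable=True)
        self.w_h = self.add_weight(name="w_h", shape=(), initializer="ones", trainable=True)
        self.b = self.add_weight(name="b", shape=(), initializer="zeros", trainable=True)

    def call(self, inputs):
        linear_part = self.w_s * inputs            # smooth branch, carries a useful gradient
        jump_part = self.w_h * heaviside(inputs)   # step branch, captures the discontinuity
        return linear_part + jump_part + self.b


layer = WellJumpLayer()
out = layer(tf.constant([[-1.0], [2.0]]))
```

Even though the step itself has zero gradient with respect to its input, `w_h` still receives a gradient, so the height of the jump remains learnable while the linear branch keeps the optimization moving.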

To reach the minimum with a well-behaved gradient while still capturing the jump discontinuity, we now try to construct a “well jump model” that takes advantage of both the classic method and the one with a Heaviside activation.

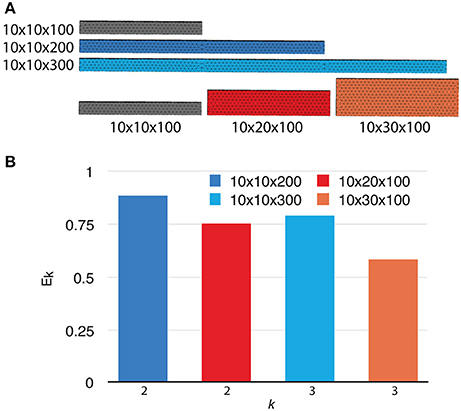

As the gradient-based method fails to reach the desired minimum, the learned function stays piecewise constant on small plateaus. The reason is that the minimizer of the loss function is searched for by computing gradients, while the derivative of a Heaviside function is a Dirac distribution, whose value cannot be defined everywhere on the real axis.

Image by author: original function vs learned function with Heaviside activation

After 20 training epochs, we observe that the model indeed captures the jump discontinuity but is unable to predict the smooth part of the function.

We now build a model with the same structure as before; the only change is that the activation function of the output layer is set to the custom Heaviside function:

```python
import tensorflow as tf


@tf.custom_gradient
def heaviside(x):
    ones = tf.ones(tf.shape(x), dtype=x.dtype.base_dtype)
    zeros = tf.zeros(tf.shape(x), dtype=x.dtype.base_dtype)

    def grad(dy):
        # The true derivative is a Dirac distribution, so the gradient is set to 0.
        return dy * 0

    # Step at x = 0.5 (use x > 0 for the textbook unit step).
    return tf.where(x > 0.5, ones, zeros), grad
```
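To see why gradient descent stalls on plateaus with this activation, a quick check with `tf.GradientTape` can help. The sketch below redefines the step function so that it runs on its own; the sample inputs and the sum used as a stand-in loss are assumptions chosen only for illustration.

```python
import tensorflow as tf


@tf.custom_gradient
def heaviside(x):
    # Same step as in the article: 1 where x > 0.5, else 0, with the gradient forced to 0.
    def grad(dy):
        return tf.zeros_like(dy)
    return tf.where(x > 0.5, tf.ones_like(x), tf.zeros_like(x)), grad


x = tf.constant([-1.0, 0.2, 0.7, 2.0])  # illustrative inputs on both sides of the step
with tf.GradientTape() as tape:
    tape.watch(x)            # x is a constant, so it has to be watched explicitly
    y = heaviside(x)
    loss = tf.reduce_sum(y)  # stand-in loss, just to have a scalar to differentiate

step_grad = tape.gradient(loss, x)
print(y.numpy())          # step values for each input
print(step_grad.numpy())  # gradient flowing back through the step
```

Because the gradient that flows back through the step is zero everywhere, every layer placed before a Heaviside output activation receives no training signal, which is consistent with the plateaus described above.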

Note that since the derivative of the Heaviside function is the Dirac distribution, which is not defined everywhere, we simply set the gradient to 0. We can define the Heaviside function with Keras as a function with a custom gradient.

The Heaviside function, also called the unit step function and usually denoted by H or θ, is a step function whose value is zero for negative arguments and one for positive arguments. Therefore, a natural idea is to set the activation function of the output layer to the Heaviside function.

The result is not surprising: the activation functions of all layers are continuous, so they regularize the original curve and smooth out the jump.

Image by author: original function vs learned function

The network with Heaviside activation

The figure below illustrates the architecture of the model.

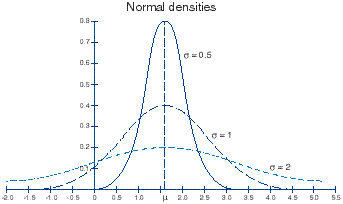

The output layer has a simple linear activation function. We first build a simple, classic neural network that contains three dense layers, where both hidden layers have 32 units. Let us start by looking at a simple piecewise function with only one jump discontinuity: the function equals sin(x) when x is positive and sin(x) otherwise, as is shown at the beginning of this article.

Neural networks failed to jump well

The classic network

In this article, I will show how to build a specific block that can capture the jump discontinuity and use the network to treat a partial differential equation (PDE) problem. As stated by the universal approximation theorem, in the mathematical theory of artificial neural networks, a feed-forward network with a single hidden layer containing a finite number of neurons can approximate continuous functions on compact subsets of a finite-dimensional real space, under mild assumptions on the activation function. However, learning functions with jump discontinuities is hard for a deep neural network, as the error around the point where the value jumps is usually large. Jump discontinuities in a function occur when all the one-sided limits exist and are finite but are not equal; you will usually encounter them in piecewise functions in which different parts of the domain are defined by different continuous functions.
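The classic network described above (three dense layers, two hidden layers of 32 units each, and a linear output) could be written in Keras roughly as follows. The article does not state the hidden activation, optimizer, or loss here, so `relu`, `adam`, and mean squared error are assumptions, as is the one-dimensional input.

```python
import tensorflow as tf

# Two hidden layers with 32 units each, followed by a single linear output unit.
model = tf.keras.Sequential([
    tf.keras.layers.Dense(32, activation="relu"),   # hidden activation is an assumption
    tf.keras.layers.Dense(32, activation="relu"),   # hidden activation is an assumption
    tf.keras.layers.Dense(1, activation="linear"),  # linear output, as in the article
])

# Scalar input x, so each sample has shape (1,).
model.build(input_shape=(None, 1))

# Optimizer and loss are assumptions; the article does not specify them in this section.
model.compile(optimizer="adam", loss="mse")
model.summary()
```

Since every activation in this model is continuous, its output is a continuous function of x, which is why it can only smooth over a jump rather than reproduce it.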
